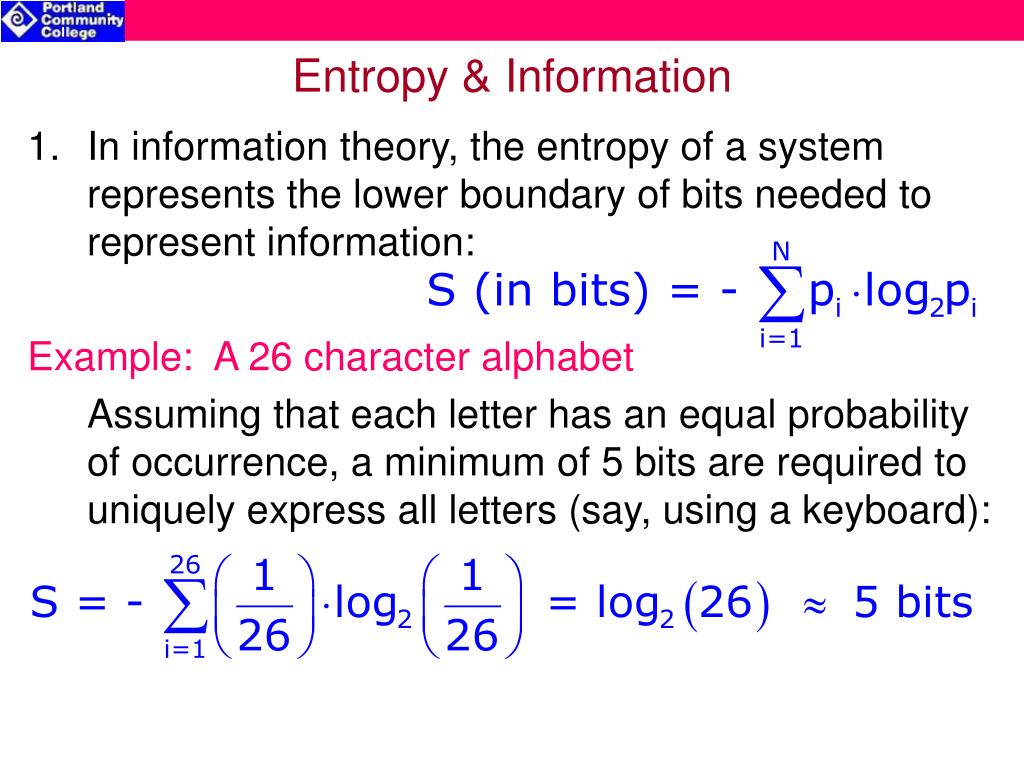

“… The author manages to balance the practice with the theory, every chapter is very well structured and has high-value content.” (Nicolae Constantinescu, Zentralblatt MATH, Vol. …)

“The book offers interesting and very important information about the theory of probabilistic information measures and their application to coding theorems for information sources and noisy channels. … it will contribute to further synergy between the two fields and the deepening of research efforts.” (Ina Fourie, Online Information Review, Vol. …)

… Entropy and Information Theory is highly recommended as essential reading to academics and researchers in the field, especially to engineers interested in the mathematical aspects and mathematicians interested in the engineering applications.

“In Entropy and Information Theory Robert Gray offers an excellent text to stimulate research in this field. … this is a deep and important book, which would reward further study as the focus of a reading group or graduate course, and comes enthusiastically recommended.” (Oliver Johnson, Mathematical Reviews, October, 2014)

“This book is the second edition of the classic 1990 text … and inherits much of the structure and all of the virtues of the original.”

The main goal of the book is a general development of Shannon’s mathematical theory of communication for single-user systems. Significant material not covered in other information theory texts includes stationary/sliding-block codes, a geometric view of information theory provided by process distance measures, and general Shannon coding theorems for asymptotically mean stationary sources, which may be neither ergodic nor stationary, and d-bar continuous channels.

The equation for entropy in information theory is:

$$H = -\sum_{i=1}^{n} P(x_i) \log_b P(x_i)$$

$H$ is the variable used for entropy. The summation (Greek letter sigma) is taken from $i = 1$ to $n$, the number of possible outcomes of the system. The next variable, $P(x_i)$, represents the probability of the $i$-th outcome $x_i$, and $b$ is the base of the logarithm; with $b = 2$, entropy is measured in bits.
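As a minimal sketch, the formula can be evaluated directly in Python. The function name `entropy` and its `base` parameter are illustrative choices, not taken from any particular library; outcomes with zero probability are skipped, following the usual convention that $0 \cdot \log 0 = 0$.

```python
import math
from typing import Sequence


def entropy(probs: Sequence[float], base: float = 2.0) -> float:
    """Return H = -sum_i P(x_i) * log_b P(x_i) for a discrete distribution.

    Outcomes with P(x_i) = 0 are skipped (convention: 0 * log 0 = 0).
    """
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    h = 0.0
    for p in probs:
        if p < 0:
            raise ValueError("probabilities must be non-negative")
        if p > 0:
            # log_b(p) computed as log2(p) / log2(b)
            h -= p * math.log2(p) / math.log2(base)
    return h


# A fair coin (50-50) has the maximum entropy for two outcomes.
print(entropy([0.5, 0.5]))  # 1.0
# A certain result (100-0) has no uncertainty at all.
print(entropy([1.0, 0.0]))  # 0.0
# A biased coin lies in between.
print(entropy([0.9, 0.1]))  # about 0.469
```

Computing the logarithm as `math.log2(p) / math.log2(base)` keeps the base-2 case exact for probabilities that are powers of two, which is why the coin examples come out as exactly 1.0 and 0.0.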

This book is an updated version of the information theory classic, first published in 1990. New and expanded material includes:

- New material on the relationships of source coding and rate-constrained simulation or modeling of random processes.
- New material on the properties of optimal and asymptotically optimal source codes.
- New material on trading off information and distortion, including the Marton inequality.
- Expanded treatment of B-processes: processes formed by stationary coding of memoryless sources.
- Expanded discussion of results from ergodic theory relevant to information theory.
- Expanded treatment of stationary or sliding-block codes and their relations to traditional block codes.

About one-third of the book is devoted to Shannon source and channel coding theorems; the remainder addresses sources, channels, and codes, and information and distortion measures and their properties.

Entropy is a term borrowed from physics, where it describes the amount of disorder in a system. In information theory, entropy measures the average amount of information carried by the outcomes of a system. If there is a 100-0 probability that a result will occur, the entropy is 0. In the context of a coin flip with a 50-50 probability, the entropy takes its highest value, 1 bit: neither result is more likely than the other, so the outcome is as uncertain as a two-outcome event can be. Information gain measures how much of this uncertainty is removed, for example by knowledge that makes one result more likely than the other.

Information rate: if a source $X$ emits symbols at a rate of $r$ symbols/second, its information rate is $R = r\,H(X)$ bits/second, where $r$ is in symbols/second and $H(X)$ is in information bits/symbol.

Entropy has applications in many areas, including lossless data compression, statistical inference, and cryptography, and in other disciplines such as biology, physics, and machine learning. Shannon called the conditional entropy $H_y(x)$ the "average ambiguity" of the received signal: it is the uncertainty, or entropy, that remains about the transmitted message once the signal has been received.
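A small sketch of the information-rate formula $R = r\,H(X)$, assuming a hypothetical four-symbol source; the probabilities, the rate of 1000 symbols/second, and the function names are made up for illustration.

```python
import math
from typing import Sequence


def entropy_bits(probs: Sequence[float]) -> float:
    """Entropy H(X) in bits/symbol; zero-probability symbols contribute 0."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def information_rate(symbol_rate: float, probs: Sequence[float]) -> float:
    """Information rate R = r * H(X) in bits/second.

    symbol_rate is r in symbols/second; H(X) is in bits/symbol.
    """
    return symbol_rate * entropy_bits(probs)


# Hypothetical source: four symbols with probabilities 1/2, 1/4, 1/8, 1/8,
# emitted at r = 1000 symbols/second.
probs = [0.5, 0.25, 0.125, 0.125]
print(entropy_bits(probs))            # 1.75 (bits/symbol)
print(information_rate(1000, probs))  # 1750.0 (bits/second)
```

The units multiply out as (symbols/second) × (bits/symbol) = bits/second, which is the sanity check worth doing whenever the formula is applied.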
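
The concept of information entropy was created by mathematician Claude Shannon, who wrote: "The conditional entropy $H_y(x)$ will, for convenience, be called the equivocation. It measures the average ambiguity of the received signal." Information and its relationship to entropy can then be modeled by:

$$R = H(x) - H_y(x)$$

where $R$ is the rate of actual transmission of information, $H(x)$ is the entropy of the source, and $H_y(x)$ is the equivocation. Entropy thus adds a mathematical tool set for quantifying disorder and uncertainty. Put another way, the more uncertain a source is, the more information its messages can carry, and the information that actually gets through is the source's uncertainty minus the uncertainty that remains after reception.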
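To make $R = H(x) - H_y(x)$ concrete, the sketch below computes both terms from a joint distribution $P(x, y)$ of transmitted and received symbols. The binary symmetric channel (each bit flipped with probability `p_error`, equiprobable inputs) is an illustrative assumption, not something the text specifies, and the helper names are hypothetical.

```python
import math
from typing import List


def transmission_rate(joint: List[List[float]]) -> float:
    """Return R = H(x) - Hy(x) in bits, from a joint distribution joint[x][y].

    H(x) is the entropy of the transmitted symbol; Hy(x) is Shannon's
    equivocation, the conditional entropy of x given the received y.
    """
    px = [sum(row) for row in joint]        # marginal P(x)
    py = [sum(col) for col in zip(*joint)]  # marginal P(y)
    h_x = -sum(p * math.log2(p) for p in px if p > 0)
    # Hy(x) = -sum_{x,y} P(x, y) * log2 P(x | y), with P(x | y) = P(x, y) / P(y)
    h_y_x = -sum(
        pxy * math.log2(pxy / py[y])
        for row in joint
        for y, pxy in enumerate(row)
        if pxy > 0
    )
    return h_x - h_y_x


def bsc_joint(p_error: float) -> List[List[float]]:
    """Hypothetical example: joint P(x, y) for a binary symmetric channel
    with equiprobable inputs and bit-flip probability p_error."""
    return [[0.5 * (1 - p_error), 0.5 * p_error],
            [0.5 * p_error, 0.5 * (1 - p_error)]]


for p in (0.0, 0.1, 0.5):
    print(p, transmission_rate(bsc_joint(p)))
```

Under these assumptions, a noiseless channel gives $R = 1$ bit per symbol, a channel that flips bits half the time gives $R = 0$, and `p_error = 0.1` gives $R \approx 0.531$ bits per symbol, because the equivocation equals the entropy of the error probability.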

Information entropy is a concept from information theory. It tells how much information there is in an event: in general, the more certain or deterministic the event is, the less information it will contain.
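The idea that more certain events carry less information is usually formalized as self-information, $I(x) = -\log_2 P(x)$ bits; entropy is the average of this quantity over all outcomes. A minimal Python sketch, with a hypothetical function name:

```python
import math


def self_information(p: float) -> float:
    """Information, in bits, carried by an event of probability p."""
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    # log2(1/p) equals -log2(p); this form avoids printing -0.0 for p = 1
    return math.log2(1.0 / p)


# The more certain the event, the less information it carries.
for p in (1.0, 0.5, 0.125):
    print(p, self_information(p))
# 1.0 0.0
# 0.5 1.0
# 0.125 3.0
```

A certain event (p = 1) carries no information, while an event with probability 1/8 carries 3 bits.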
